You are using an out of date browser. It may not display this or other websites correctly.

You should upgrade or use an alternative browser.

You should upgrade or use an alternative browser.

Normalization

- Thread starter dbacdog

- Start date

TomBascom

Curmudgeon

What do you mean by "redundant data"? That covers a multitude of sins.

One common problem that fits the description is "redundant indexes". It is pretty common for applications to have lots of indexes defined and for a good number of them to be subsets of others. Which often means that they are never used, wasting space and hurting performance (because they have to be updated even though they are never used). Is that sort of thing your concern?

It is also common for certain fields to be duplicated in multiple tables. Often with slightly different names. Frequently with poor synchronization of supposedly synchronized values. Are you looking for that kind of problem?

Or maybe you're concerned that the same value is being populated many times? Like 113 slightly different customer records for "Progress Software"?

If you are primarily concerned with normalization, the relationships between tables and the fields that those tables contain then you need some tooling to help you with db design. I have heard that PDSOE might have something along those lines. But I have not tried to use it. There are also lots of 3rd party options. Naturally they all assume you are running SQL database so that will result in some "challenges".

None of that would care if you have 1 user, 2,000 users, or 10,000 users. So if the user count is a significant tidbit you must be thinking of something else. I don't know what that would be though.

Ditto HPUX. That doesn't seem like it would be relevant. But if it is you should explain how.

One common problem that fits the description is "redundant indexes". It is pretty common for applications to have lots of indexes defined and for a good number of them to be subsets of others. Which often means that they are never used, wasting space and hurting performance (because they have to be updated even though they are never used). Is that sort of thing your concern?

It is also common for certain fields to be duplicated in multiple tables. Often with slightly different names. Frequently with poor synchronization of supposedly synchronized values. Are you looking for that kind of problem?

Or maybe you're concerned that the same value is being populated many times? Like 113 slightly different customer records for "Progress Software"?

If you are primarily concerned with normalization, the relationships between tables and the fields that those tables contain then you need some tooling to help you with db design. I have heard that PDSOE might have something along those lines. But I have not tried to use it. There are also lots of 3rd party options. Naturally they all assume you are running SQL database so that will result in some "challenges".

None of that would care if you have 1 user, 2,000 users, or 10,000 users. So if the user count is a significant tidbit you must be thinking of something else. I don't know what that would be though.

Ditto HPUX. That doesn't seem like it would be relevant. But if it is you should explain how.

I know user count or OS does not matter in this post. Just a habit.

Over the last 25 years we have added lots of tables, indexes, and modified over 5,000 programs. I want to know if our database is normalize or not. From a front end perspective, I don't know if users are inputting the same data more then once or twice, etc... I see tables that have the same data, however, does not mean it is a bad thing,......I just thought maybe there is a tool, or some other way to see if our database is good.

Over the last 25 years we have added lots of tables, indexes, and modified over 5,000 programs. I want to know if our database is normalize or not. From a front end perspective, I don't know if users are inputting the same data more then once or twice, etc... I see tables that have the same data, however, does not mean it is a bad thing,......I just thought maybe there is a tool, or some other way to see if our database is good.

Hello,

Going to piggyback on this conversation.

Our department is undergoing some much needed overhaul. Our shop has had a lot of chefs in the kitchen, if you will, with all access to creating tables, adding fields, indexes and whatever else. We are seeing some slowing in performance despite a fairly recent upgrade on the box the server is sitting on. We are looking to get a good comprehensive look at what we have at this moment so that we can best map how to fix it and move forward.

Is there a specific tool in Progress that might show what our current table relationships are? Perhaps a 3rd party vendor that has one specifically for Progress? The ones I have come across so far in my search have been for SQL. Also, is there something that can show how to best achieve normalization for our existing database and its current table relationships?

Going to piggyback on this conversation.

Our department is undergoing some much needed overhaul. Our shop has had a lot of chefs in the kitchen, if you will, with all access to creating tables, adding fields, indexes and whatever else. We are seeing some slowing in performance despite a fairly recent upgrade on the box the server is sitting on. We are looking to get a good comprehensive look at what we have at this moment so that we can best map how to fix it and move forward.

Is there a specific tool in Progress that might show what our current table relationships are? Perhaps a 3rd party vendor that has one specifically for Progress? The ones I have come across so far in my search have been for SQL. Also, is there something that can show how to best achieve normalization for our existing database and its current table relationships?

TomBascom

Curmudgeon

Progress table relationships are by polite convention. And everyone has their own idea what that convention should be. There is no formal method to define and enforce them. A common (but far from universal) convention is to use the same field names in related tables in order to indicate relationships.

As time goes by and various people get involved these conventions tend to drift. Especially if documentation and coding standards are weak (or also drifting).

Unless you are very lucky you probably won’t find find a tool that will do it all but you might find something that provides some help. First I would spend a bit of time manually looking in to it and talking to people. Especially “old hands”. That might help to identify patterns which, in turn, would help you to evaluate which tools might help.

As for performance issues... Progress OpenEdge Monitoring with ProTop is your friend. And you should open a new thread to discuss those if you’d like.

As time goes by and various people get involved these conventions tend to drift. Especially if documentation and coding standards are weak (or also drifting).

Unless you are very lucky you probably won’t find find a tool that will do it all but you might find something that provides some help. First I would spend a bit of time manually looking in to it and talking to people. Especially “old hands”. That might help to identify patterns which, in turn, would help you to evaluate which tools might help.

As for performance issues... Progress OpenEdge Monitoring with ProTop is your friend. And you should open a new thread to discuss those if you’d like.

Rob Fitzpatrick

ProgressTalk.com Sponsor

Yes, though it is limited. There is a "Table Relations" report in the data dictionary. It assumes that fields are named the same across tables. So if you have a "custnum" field in table A and a child table B with the same data in a "cust-num" or "B-custnum" field, that tool won't report on that table relation. Progress doesn't have a way of explicitly expressing table relationships in the schema, so if you haven't been strict about field naming then your only resources for finding relationships will be looking at your internal documentation or talking to the developers who understand your data model.Is there a specific tool in Progress that might show what our current table relationships are?

This may be obvious but an important first step in getting out of a hole is to stop digging. You described a system where lots of people have made schema changes. It should be high on your priority list to create a new system, where all proposed schema changes are centrally reviewed and vetted against a written set of design criteria. Reviewing such changes at the time of code review is too late. It can be challenging but in order to solve this problem the culture and business processes need to change and you need management buy-in to make it possible.

As for "slowing in performance", that may or may not be related to your "lots of chefs in the kitchen" problem. Without a lot more detail there is no way to know. If you want to pursue that aspect further, please start a new thread as that is unrelated to normalization.

tamhas

ProgressTalk.com Sponsor

Perhaps all present know this, but I think it is worth saying explicitly ... some databases have table relationships expressly specified in the data structure of the database, but Progress does not. Some packages have naming conventions like those referred to above, sometimes complicated by a prefix or some such, but even if present there is no guarantee that all such relationships will be actually detectable. Your only sure guide is the code. Indeed, you may find that there are empirical relationships in the code which are not represented in the data structure as well as apparent relationships in the data structure that are not actually used in the code. It is possible to detect these relationships from the output of COMPILE.

JOSE_LUIS_VEGA

Member

Hello dbacdog:I know user count or OS does not matter in this post. Just a habit.

Over the last 25 years we have added lots of tables, indexes, and modified over 5,000 programs. I want to know if our database is normalize or not. From a front end perspective, I don't know if users are inputting the same data more then once or twice, etc... I see tables that have the same data, however, does not mean it is a bad thing,......I just thought maybe there is a tool, or some other way to see if our database is good.

I think what you need is a deep analysis of the architecture of the database, I recommend using ERwin, it gives you a very complete graphical vision of the database. Also with so many tables, it would be good to see if it can be split into more than one database, based on the nature of the data, I hope it helps you. I have 30 years of experience in highly complex systems.

JOSE_LUIS_VEGA

Member

If you don't have ERwin, you can do: Data Dictionary / Reports / Table Relations / All Tables. Although this will take a long time, verify that indexes are too many or access the same data.I know user count or OS does not matter in this post. Just a habit.

Over the last 25 years we have added lots of tables, indexes, and modified over 5,000 programs. I want to know if our database is normalize or not. From a front end perspective, I don't know if users are inputting the same data more then once or twice, etc... I see tables that have the same data, however, does not mean it is a bad thing,......I just thought maybe there is a tool, or some other way to see if our database is good.

Rob Fitzpatrick

ProgressTalk.com Sponsor

Why?Also with so many tables, it would be good to see if it can be split into more than one database

JOSE_LUIS_VEGA

Member

Because that significantly improves performance, according to a system, it groups tables according to the subject matter, so we can have: treasury, accounting, distribution and logistics modules, suppliers, clients, etc. If it is not ordered, it can become a mess, both for modification and maintenance.Why?

Rob Fitzpatrick

ProgressTalk.com Sponsor

I understand the appeal of treating databases like containers so you can mentally compartmentalize components by their business function. But I can't agree with a blanket statement that it "significantly improves performance"; particularly when you're talking about someone else's application that I have to assume you have no prior knowledge of.

Let's imagine we take an application with the functional areas you describe and we split it into n databases, where n=5 (or more?). That in itself is a project that involves time, risk, and cost. Assuming you do that successfully, what is the future ongoing impact on operational cost and risk management?

You now have:

Let's imagine we take an application with the functional areas you describe and we split it into n databases, where n=5 (or more?). That in itself is a project that involves time, risk, and cost. Assuming you do that successfully, what is the future ongoing impact on operational cost and risk management?

You now have:

- n database backups to complete and verify each night, rather than one;

- n sets of AI files to monitor, rather than one;

- n warm spare or replica databases to maintain and monitor, rather than one;

- n sets of TCP ports to allocate, per broker, rather than one;

- n times more database processes (brokers, servers, page writers, utilities, monitors, etc.), consuming more CPU cycles and memory on the same hardware;

- n times more periodic maintenance tasks to do (e.g. index compact or rebuild, SQL statistics updates, dump and load, repair, schema update, structure update, policy updates, user management, audits, etc.);

- the possibility that a business transaction may complete in an inconsistent manner because it involves tables from different databases and therefore different database transactions that could complete or roll back independently;

- the possibility that in a disaster recovery scenario, you have valid backups and AI files for n-x of your databases but not for the other x databases. Then what? Will the databases restored to more recent points in time then have orphan records that are inaccessible? Or point to child records that don't exist in the other databases? Did the application developers plan for this? Are the failure modes understood?

- n times as many status and performance metrics to monitor; e.g. buffer hit ratio, logical to physical ratio, transaction rates, undo rates, long-running transactions, BI size and growth rate, latch waits, resource waits, read rates, space management, page writer performance, the list goes on and on.

- a fixed pool of memory split n ways; buffer pools, AI and BI buffers, caches for TDE, OM, CDC and more. What split is best for application performance? Will that always be the best split? Are you giving suppliersdb much more cache than it needs and starving clientsdb because it doesn't have enough? That's bad for performance. So you have to monitor, tune, restart both, and continue to monitor. But what if the next version of the application changes the I/O profile and now there is much less read activity in suppliersdb but much more in accountingdb, so again you are hurting performance. You could spend a lot of time chasing these problems you have created, rather than let a single database cache what it needs to, as the application, the business patterns, or the user behaviour change over time.

- There are probably lots of other downsides to doing this, but the above is what I came up with offhand.

The multi-database approach, especially when applied to an existing single-database application, sounds more like a "mess" to me, however aesthetically pleasing it might seem to a developer. And it is not clear to me why a single large database should be assumed to be "not ordered".If it is not ordered, it can become a mess, both for modification and maintenance.

JOSE_LUIS_VEGA

Member

My vision is that of a developer, if you need to do an audit of systems, it is clearer to face this by modules, this separates tables based on the subject they deal with, while some programmers are using another model of the database and do not have to leave , so that they modify the entire database. This is real and I know, we did it in highly complex systems.I understand the appeal of treating databases like containers so you can mentally compartmentalize components by their business function. But I can't agree with a blanket statement that it "significantly improves performance"; particularly when you're talking about someone else's application that I have to assume you have no prior knowledge of.

Let's imagine we take an application with the functional areas you describe and we split it into n databases, where n=5 (or more?). That in itself is a project that involves time, risk, and cost. Assuming you do that successfully, what is the future ongoing impact on operational cost and risk management?

You now have:

The above is not to say that there are not multi-database applications that work well and perform well. But the original poster is best served by being able to make an informed decision, weighing costs against benefits. It is not as if splitting one database into many is some silver bullet that magically makes everything better.

- n database backups to complete and verify each night, rather than one;

- n sets of AI files to monitor, rather than one;

- n warm spare or replica databases to maintain and monitor, rather than one;

- n sets of TCP ports to allocate, per broker, rather than one;

- n times more database processes (brokers, servers, page writers, utilities, monitors, etc.), consuming more CPU cycles and memory on the same hardware;

- n times more periodic maintenance tasks to do (e.g. index compact or rebuild, SQL statistics updates, dump and load, repair, schema update, structure update, policy updates, user management, audits, etc.);

- the possibility that a business transaction may complete in an inconsistent manner because it involves tables from different databases and therefore different database transactions that could complete or roll back independently;

- the possibility that in a disaster recovery scenario, you have valid backups and AI files for n-x of your databases but not for the other x databases. Then what? Will the databases restored to more recent points in time then have orphan records that are inaccessible? Or point to child records that don't exist in the other databases? Did the application developers plan for this? Are the failure modes understood?

- n times as many status and performance metrics to monitor; e.g. buffer hit ratio, logical to physical ratio, transaction rates, undo rates, long-running transactions, BI size and growth rate, latch waits, resource waits, read rates, space management, page writer performance, the list goes on and on.

- a fixed pool of memory split n ways; buffer pools, AI and BI buffers, caches for TDE, OM, CDC and more. What split is best for application performance? Will that always be the best split? Are you giving suppliersdb much more cache than it needs and starving clientsdb because it doesn't have enough? That's bad for performance. So you have to monitor, tune, restart both, and continue to monitor. But what if the next version of the application changes the I/O profile and now there is much less read activity in suppliersdb but much more in accountingdb, so again you are hurting performance. You could spend a lot of time chasing these problems you have created, rather than let a single database cache what it needs to, as the application, the business patterns, or the user behaviour change over time.

- There are probably lots of other downsides to doing this, but the above is what I came up with offhand.

The multi-database approach, especially when applied to an existing single-database application, sounds more like a "mess" to me, however aesthetically pleasing it might seem to a developer. And it is not clear to me why a single large database should be assumed to be "not ordered".

JOSE_LUIS_VEGA

Member

That is true, it is my turn as a developer and as an auditor, beyond the technology, if you work well in an orderly way, that will be detected in our project and I develop a methodology that identifies the tables of separated bases, to see if it is not They connect to give notice, it is because they may be indo modified in their structure.Important in this evaluation is that it is highly likely that some transactions will span databases, necessitating two phase commit and the risk of one side of that transaction failing.

TomBascom

Curmudgeon

You could certainly organize things differently at the logical layer than at the physical layer.

Rob is absolutely correct that there are a lot of downsides to needlessly having multiple (physical) databases.

There can be some upsides, particularly if you're doing an old-school multi-tenant implementation where keeping client data separate is a requirement. Or if you have certain hardware constraints making themselves known. But those are fairly rare situations that can be dealt with quite separately from the logical design of the database.

Rob is absolutely correct that there are a lot of downsides to needlessly having multiple (physical) databases.

There can be some upsides, particularly if you're doing an old-school multi-tenant implementation where keeping client data separate is a requirement. Or if you have certain hardware constraints making themselves known. But those are fairly rare situations that can be dealt with quite separately from the logical design of the database.

JOSE_LUIS_VEGA

Member

What you point out is what happens in the environments in which I work, not only in my country, where the old school at the business level makes it very difficult to implement other forms of work. I recently saw bad architecture in a large base in Ukraine on migration, even worse than the gaps I saw here in my midst. It is more try to achieve scripts that control things that are usually left to groups of tasks and I have not been able to make them work due to what you indicate here.You could certainly organize things differently at the logical layer than at the physical layer.

Rob is absolutely correct that there are a lot of downsides to needlessly having multiple (physical) databases.

There can be some upsides, particularly if you're doing an old-school multi-tenant implementation where keeping client data separate is a requirement. Or if you have certain hardware constraints making themselves known. But those are fairly rare situations that can be dealt with quite separately from the logical design of the database.

JOSE_LUIS_VEGA

Member

Surely it is like that, most of the problems are rarely a single thing, I have had to see that the misuse of indexes, coupled with an unclear access, with a very saturated server, sometimes confuses.It is probably worth noting that, if the concern for performance is based on the existing unified database, it is quite likely that something else is the source of the performance issues, rather than it coming from a unified database.

Patrice Perrot

Member

Hi ,

Another point for future (OE12), SSJ will not work for a request on several DB , it could have a huge impact on performance.

Tom and Rob are correct , managing multiple Db could be harder than a single DB.

I am responsible for over 10K hosted databases for my clients, all of them have several DB (more than 15 DB).

There is no "magic parameter" , each DB should have its set of parameter, you should set them individually, (if a memory consumption increase for a DB , you should decrease it on another DB). Tuning thoses parameter is "funny" game ...

It is feasable but it tooks times.

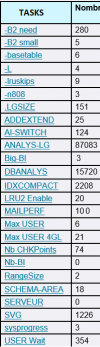

I join a picture of my activity of the previuos week, it is an uncomplete summary, some tasks are missing .

More Db means more time to do the tasks.

Patrice

Another point for future (OE12), SSJ will not work for a request on several DB , it could have a huge impact on performance.

Tom and Rob are correct , managing multiple Db could be harder than a single DB.

I am responsible for over 10K hosted databases for my clients, all of them have several DB (more than 15 DB).

There is no "magic parameter" , each DB should have its set of parameter, you should set them individually, (if a memory consumption increase for a DB , you should decrease it on another DB). Tuning thoses parameter is "funny" game ...

It is feasable but it tooks times.

I join a picture of my activity of the previuos week, it is an uncomplete summary, some tasks are missing .

More Db means more time to do the tasks.

Patrice

Attachments

Similar threads

- Replies

- 3

- Views

- 349

- Replies

- 5

- Views

- 1K

R

- Locked

- Replies

- 0

- Views

- 505

R