Paul Frost

Morning all,

We're in the process of evaluating a platform switch from HP-UX on the Itanium chipset to Linux64 on Dell Intel hardware. I've used readprobe consistently over the last 20 years to compare the read potential of one machine against another, and I'm getting some unexpected results from readprobe on the Linux platform.

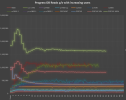

The graph shows a line for every Progress database server we have had, going back to 2000 when we first started using them.

The red and green lines at the top of the graph are our two main current HP machines. Their profile is similar to that of all the previous machines tested: the number of reads increases steeply as each of the CPUs is utilised (i.e. one user can only use one CPU, whereas two users can use two CPUs, and so on). Once all CPUs have users on them, the line flattens out as the processes all vie for CPU time. The sign of a good machine (for db reads anyway) is that the reads don't drop too far from the maximum as the number of users ramps up.
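To put the shape I'm expecting into concrete terms, here is a rough Python sketch. The function name and the simple "linear up to the CPU count, then flat" model are my own simplification for illustration, not anything readprobe itself calculates, and the 16 CPUs and 1m reads per second are just the figures from the new Dell box.

```python
def expected_reads(users, cpus, reads_per_cpu):
    """Idealised reads/sec: grows with each extra user until every CPU is busy, then stays flat.

    Hypothetical model for illustration only; real machines lose some
    throughput to contention once users outnumber CPUs.
    """
    if users < 0 or cpus <= 0 or reads_per_cpu < 0:
        raise ValueError("users and reads_per_cpu must be >= 0, cpus must be > 0")
    # Each user can keep at most one CPU busy, so only min(users, cpus) CPUs do work.
    return min(users, cpus) * reads_per_cpu


if __name__ == "__main__":
    # Assumed figures: 16 CPUs, 1m reads/sec for a single user.
    for users in (1, 2, 4, 8, 16, 32):
        print(users, expected_reads(users, cpus=16, reads_per_cpu=1_000_000))
```

Under this idealised model the line should climb until the user count reaches the CPU count and then level off, which is the opposite of a line that starts at its peak and falls.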

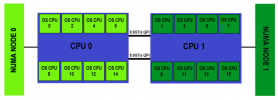

The lilac line starting at 1m reads is the new Dell PER740 and, as you can see, it has the reverse profile, which is baffling me a bit. It starts with one user being able to read 1m records per second, which is excellent, but then nosedives as the number of users increases. It's almost as if whatever component handles switching processes between CPUs is the bottleneck and is not working efficiently. Clearly, if it followed the same profile as the others, starting at 1m and going up as the 16 CPUs were used, the overall results would be massively higher than those of the current machines, which is what we expected with the more powerful CPUs.

We've tried with hyper-threading both on and off and it made little difference (the hyper-threaded result is the orange line, which mirrors the lilac one but sits just a bit below it).

Have any of you encountered anything similar? Are we missing something in our setup that would cause this inverse graph line? The Linux flavour is Red Hat, and the Dell server has the same number of CPUs as the HP machines.

I would appreciate any words of wisdom on the subject, either to help me understand the cause of the inverse results or to point at what can be tweaked to improve the results I am getting.

Thanks in advance

Paul
